Conversational Design: What Hundreds of Prompts Taught Us About Customer-facing AI

How can many agents, each with distinct goals, speak with one voice and resonate with human users?

Executive Summary

- Customer-facing AI represents your brand, and the craft it requires is categorically different from that of internal tooling.

- The difference between a functional agent and a convincing one is more conceptual than technical because most prompts are written as instruction sets, not as designs for dialogue.

- A better frame comes from linguistics and cognitive psychology: conversations have structure, and prompts create an attentional environment. What you put first, what you repeat, and how you close will reliably shape the agent’s behaviour.

- Constraints help, but only when they’re precise. Too many edge cases produce stilted interactions and unwieldy prompts, whereas leaving the agent fewer decisions to make focuses it on the important ones.

Building conversational, customer-facing AI is daunting for a business.

Even for hyper-adopters of the technology, who’ve embraced AI collaboration at the individual employee level and perhaps even developed custom AI tooling for internal use, promoting AI to the interface between your business and the world is another matter entirely.

And it is a promotion. When AI goes customer-facing, it graduates from industrious intern to endorsed employee, putting on the uniform and representing the brand in the eyes of customers.

The stakes are high. Each misstep is now public and potentially recorded.

At Tomoro, the products we build are often multi-agent systems with complex interactions at the business level. However, that logic can be the easier part. The greater challenge is convincing users that they’re talking to one agent the whole time, and that what the agent has to say is worthwhile.

So we write and rewrite prompts, again and again.

Some agents are hundreds of prompt iterations in the making. Each has the same goal: stay on task and speak with a voice that resonates with human users.

Refining prompts this way has pushed us to look further afield for inspiration, and we’ve started borrowing from other disciplines. Not everything has worked, of course, and what has worked has rarely landed at the first attempt. Out of these exploratory efforts, a different paradigm for prompting has emerged within our team.

Prompting Insights from Linguistics and Cognitive Psychology

The gap between a systematically functional agent and a conversationally brilliant one isn’t purely technical; it’s conceptual. It’s the distance between the world in which you’ve positioned the agent via your prompt and the real world of human communication in which the agent operates.

The latter is defined by its ambiguity, to which humans are well attuned and which good-faith communicators navigate co-operatively via a set of subtle, unspoken rules of discourse. An agent that is ill-equipped to follow those rules stands out immediately.

To preserve the fourth wall, or the Turing illusion, we can ground our agents in the art of dialogue using insights from linguistics and cognitive psychology.

Linguistics has a whole toolkit for studying language in action, including Conversation Analysis, which looks at how people structure and manage conversations moment by moment. Applied to prompting, this framework gives us a vocabulary for thinking about conversation, not as an exchange of words, but as an exchange of actions.

Some actions are practical, like getting or giving information, while others are social, like maintaining rapport or avoiding friction. Such moves take place within the broader structure of dialogue and its phases, each of which has expected actions. Dialogues have openings and closings, but also topic transitions and even pre-transitions — those sometimes awkward moments when a new direction is subtly proposed, negotiated, and decided between the lines.

Miscommunication happens all the time, even when all parties have the best intentions. People excel at the art of dialogue to varying degrees, but the point is that the LLM behind your agent doesn’t come prepackaged with the intuition that even an average human communicator possesses.

How to Harness Attention and Achieve Constrained Clarity

Alongside the linguistic analysis, cognitive psychology offers something complementary. It tells us how attention, memory, and decision-making actually work — how people weight what comes first versus last, how specific triggers produce reliable behaviour, how constraints can liberate by reducing cognitive load.

A prompt is not a neutral container for instructions. It’s an attentional environment. So, what sits at the top matters. What sits at the bottom matters. What is repeated matters. Given a list, people tend to remember the beginning and the end more readily than the middle. Psychologists call this the serial position effect: primacy and recency.

In practice, we see something similar with LLMs. The earliest guidance establishes the frame: who the agent is, what role it is playing, what kind of world it expects to encounter. Closing guidance often behaves like “the last thing it hears”. If we put our most important instructions there, they have a better chance of playing out.
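The positioning idea above can be made concrete with a minimal sketch. It assumes a prompt is assembled from three parts: a frame that goes first, routine guidance in the middle, and a short list of priorities that goes last. The function name, the section wording, and the bookshop example are all hypothetical illustrations, not a prescribed template.

```python
def assemble_prompt(frame: str, guidance: list[str], closing: list[str]) -> str:
    """Put the frame first, routine guidance in the middle, priorities last."""
    sections = [frame.strip()]
    sections.extend(item.strip() for item in guidance)
    if closing:
        # The closing block is "the last thing it hears".
        reminders = "\n".join(f"- {item.strip()}" for item in closing)
        sections.append("Before you reply, remember:\n" + reminders)
    return "\n\n".join(sections)


# Hypothetical example content, for illustration only.
prompt = assemble_prompt(
    frame="You are a support assistant for an online bookshop.",
    guidance=[
        "Help customers track orders and start returns.",
        "Keep replies short and friendly.",
    ],
    closing=["Never promise a refund before checking the order status."],
)
print(prompt)
```

Keeping the three parts as separate arguments makes the placement decision explicit: moving an instruction from the middle to the closing list is a deliberate edit, not an accident of how the prompt happened to be drafted.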

While we carefully consider where to position various instructions within our prompt, so too should we track the overall shape of the boundary those instructions are creating for our agent.

Instructions work as constraints on our agent, reducing the number of competing possibilities it has to resolve and narrowing the overall problem space. In our prompts, we need enough constraint to make the agent’s task manageable.

When instructing human employees, especially less experienced ones, psychology tells us we would be wise to disambiguate the task at hand and thereby reduce cognitive load as much as possible. While constraints may strike us as inhibiting freedom, having fewer decisions to make frees us up to focus on the few important decisions that remain and apply creativity to executing our actions within limits.

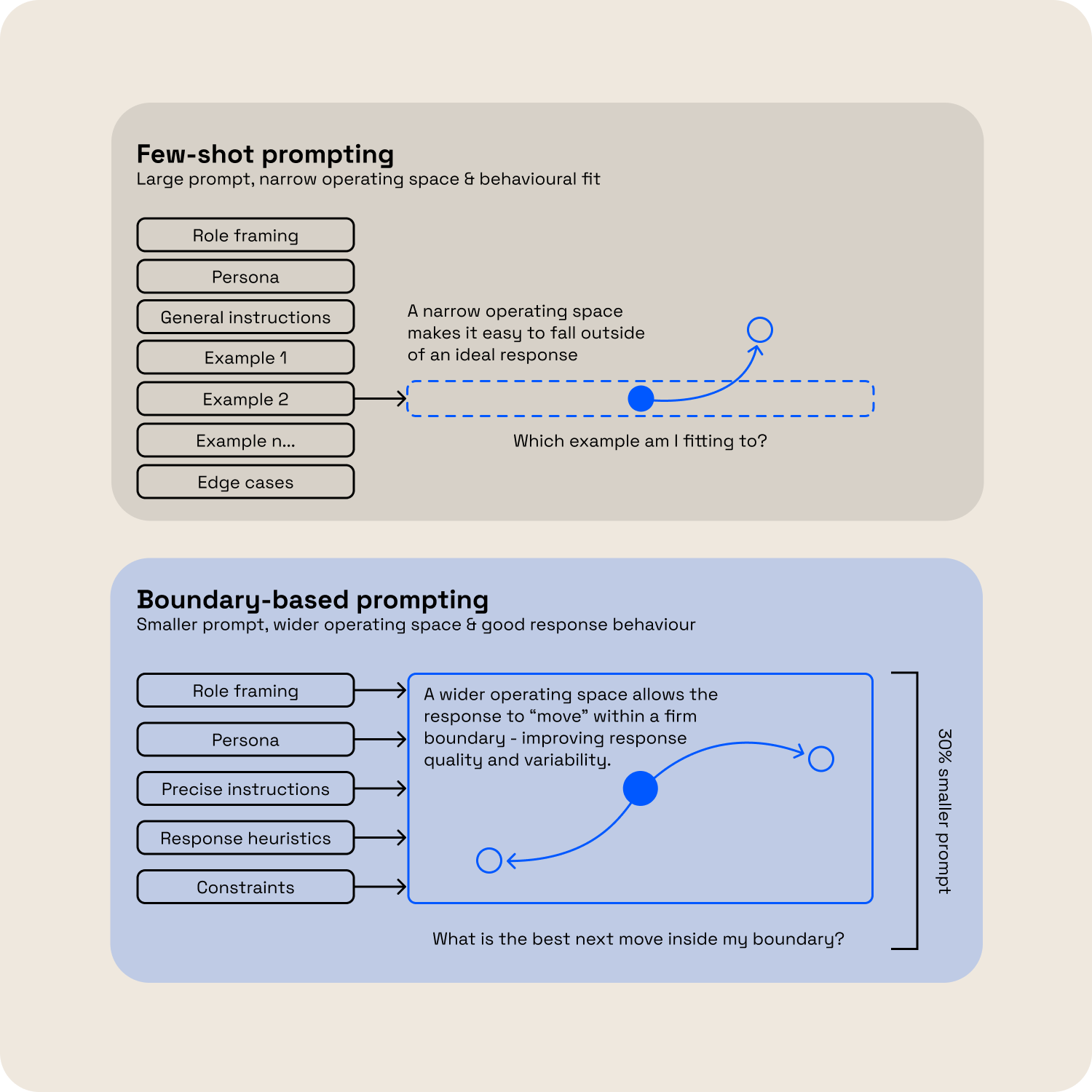

Conversely, trying to give our agent zero decisions to make by covering every conceivable edge case with lists of example behaviours is a good way to create a stilted conversationalist, not to mention an unwieldy, Frankenstein prompt of ifs and elses that’s a nightmare to manage.

(Few-shots, we’re looking at you.)

A well-bounded prompt provides enough clarity of direction, without overloading the brief and paralysing the agent. Often this means introducing fewer edge cases or targets for the agent, both in terms of what or what not to say and how or how not to say it.

Few-shot versus Boundary-based Prompting

In our experience, if you’re reaching for a few-shot to adjust your boundary, your boundary lacks precision. Get back to the core instructions and refine them.

Designing the Conditions for Better Dialogue

From the cognitive psychology perspective, we’ve been reflecting on where we should place different instructions within a prompt and considering both the quantity and quality of instruction required to best bound our agent’s problem space.

Recalling our earlier observations on the art of dialogue from the linguistics perspective, we can round out our prompting paradigm by framing dialogue-driven instruction as part of the broader task boundary for our agent.

For customer-facing AI, dialogue is the meta-task, always, whether or not we acknowledge it. When we choose to address dialogue intentionally in our prompt, we commit to channelling part of our agent’s bandwidth or “cognitive load” to dialogue awareness and responsiveness in a way that will improve its conversational ability.

However, the tradeoff is that this will likely require us to reduce the size of the main task to maintain a manageable, well-bounded problem space overall.

So, for customer-facing AI, we design the conditions for better dialogue and decisions holistically. We aim for smaller, well-bounded agents, whose main task is contextualised within or inclusive of the meta-task of dialogue. We don’t write piles of instructions that get refined through examples. We write a smaller set of refined instructions with the precision to make examples redundant.
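As a rough illustration of smaller, well-bounded agents that share the meta-task of dialogue, here is a minimal sketch. It assumes each agent's prompt is built from a role, one shared dialogue brief, and a single narrow task. The `AgentSpec` class, the `DIALOGUE_BRIEF` wording, and the two example agents are hypothetical.

```python
from dataclasses import dataclass

# Shared dialogue guidance: the meta-task every agent carries (hypothetical wording).
DIALOGUE_BRIEF = (
    "Treat every reply as a turn in a conversation: acknowledge what the "
    "customer just said, signal a change of topic before making it, and "
    "close the conversation courteously."
)


@dataclass(frozen=True)
class AgentSpec:
    name: str
    role: str  # who the agent is; placed first to set the frame
    task: str  # one narrow main task; no few-shot examples

    def build_prompt(self) -> str:
        # Role first, shared dialogue brief next, the bounded task last.
        return "\n\n".join([self.role, DIALOGUE_BRIEF, self.task])


# Hypothetical agents, for illustration only.
agents = [
    AgentSpec(
        name="orders",
        role="You are the assistant for an online bookshop.",
        task="Answer questions about order status only.",
    ),
    AgentSpec(
        name="returns",
        role="You are the assistant for an online bookshop.",
        task="Guide the customer through starting a return.",
    ),
]

prompts = {agent.name: agent.build_prompt() for agent in agents}
print(prompts["orders"])
```

Because every agent shares the same role and dialogue brief and differs only in its task, the sketch leans towards the one-voice goal while keeping each agent's problem space small.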

This is the prompting paradigm we use to create agents that not only stay on task, but that do so with conversational elegance.

With the conceptual landscape mapped, we’ll spend our next blog post getting more practical. We’ll look at some key patterns that have emerged from our efforts to apply these principles to prompts through real-world trial and error. Interestingly, frontier model providers are beginning to reflect similar patterns in their own prompting guidance. More next time.