Introduction

This blog explores our experience, and lessons learned, of building retrieval augmented generation (RAG) based large language model (LLM) applications at scale.

Traditional RAG based LLM applications typically employ some form of cognitive search during the retrieval phase of the execution. When retrieving from a corpus of unstructured data, the most standard approach taken is to employ vector search.

While this approach works well at the prototype scale; there are several issues that often crop up when scaling the application. Below, we discuss the use of graphs to curate unstructured data, in tandem with LLMs, and overcome these challenges.

This is part 1, where we set out the overall approach. If you’d like to see a worked example, go to part 2.

The vector search approach

Vector search is very commonly used to perform “semantic search” on unstructured data.

In this approach, the unstructured data is processed through an embedding function and the resulting vectors are stored in a data store. During the retrieval phase, the query is also embedded (often using the same embedding function) and a ‘vector search’ operation is performed.

This operation typically calculates a distance between the query vector and the vectors in the data stores. The closest documents, i.e. documents that are most similar to the original query, are found, as these are expected to contain the context most relevant to the query.

The closest documents (in plain text) are then provided as the context along with the original query - this step is the “augmentation” part of the RAG pattern. A LLM then uses the context contained in the document to answer the query - this is the “generation” portion of the RAG pattern.

Issues with vector search

When implementing at scale, several problems emerge; for example:

Distributed context

Unstructured data is often created in a local context. For example, an email document might assume that the reader is aware of the previous emails in the chain. When unstructured data like this is stored in a vector database, the context is often spread across numerous documents.

During the retrieval phase, the query will weakly match many documents that discuss the subject. This can result in large amounts of tokens returned as context and will add to LLM completion cost.

This maybe acceptable in use cases where the objective of the application is to summarise, however if the use case requires a specific answer to a question, the added noise in the context window can degrade the quality of the answer generated by LLMs.

Right sizing the documents

When storing embeddings in a vector datastore, the developer has to make a choice on how to partition the unstructured data. This is done to ensure that the document size is not overly large or too small.

If the document is overly large, then the same document will be returned for numerous queries and the token length of the context window will be noisy and unnecessarily large. Conversely, if the document is too small, the context required to answer the query might be split across multiple documents and the retrieval step may miss returning all the relevant documents to answer the query.

There is no “correct” answer on how to correctly partition the data. The optimal document sizing is dependent on a number of factors, including the type of queries expected by the application.

Thus, the modelling decision made when curating the vector database can become tightly coupled to the use cases and reduce the number of applications that can use the same vector index.

Conflicting information

If the vector store contains conflicting information, these will be returned during the retrieval phase. When storing conflicting information in a vector search; there is no elegant way to identify and resolve factual conflict.

Graph database approach

An alternative to the embedding and vector search is to curate the data in graphs. This process applies techniques like named entity extraction (NER) and tools like large pre-trained models fine-tuned on entity relationship extraction from unstructured text.

Extracted entities and their relationships are modelled as nodes, attributes and relationships in a graph database. This approach allows a concise curation of unstructured data in a graph which can traversed at the time of retrieval. Each node and relationship can be tested for relevance to the original query and be collected in the context. Additionally, the links in the graph can be traversed for additional information based on relationship types.

For example, consider this collection of unstructured information spread across multiple documents:

Text from newspaper article (Document 1):

“George Martin, born on 3rd Jan 1926, celebrated his 75th birthday…… (continues for 2 pages)”

Text from interview transcript (Document 2):

“George married his wife in a small ceremony in 2011…. (continues for 4 pages)”

Text from database export (Document 3):

“George lives with his wife Parris McBride in Santa Fe, New Mexico…..”

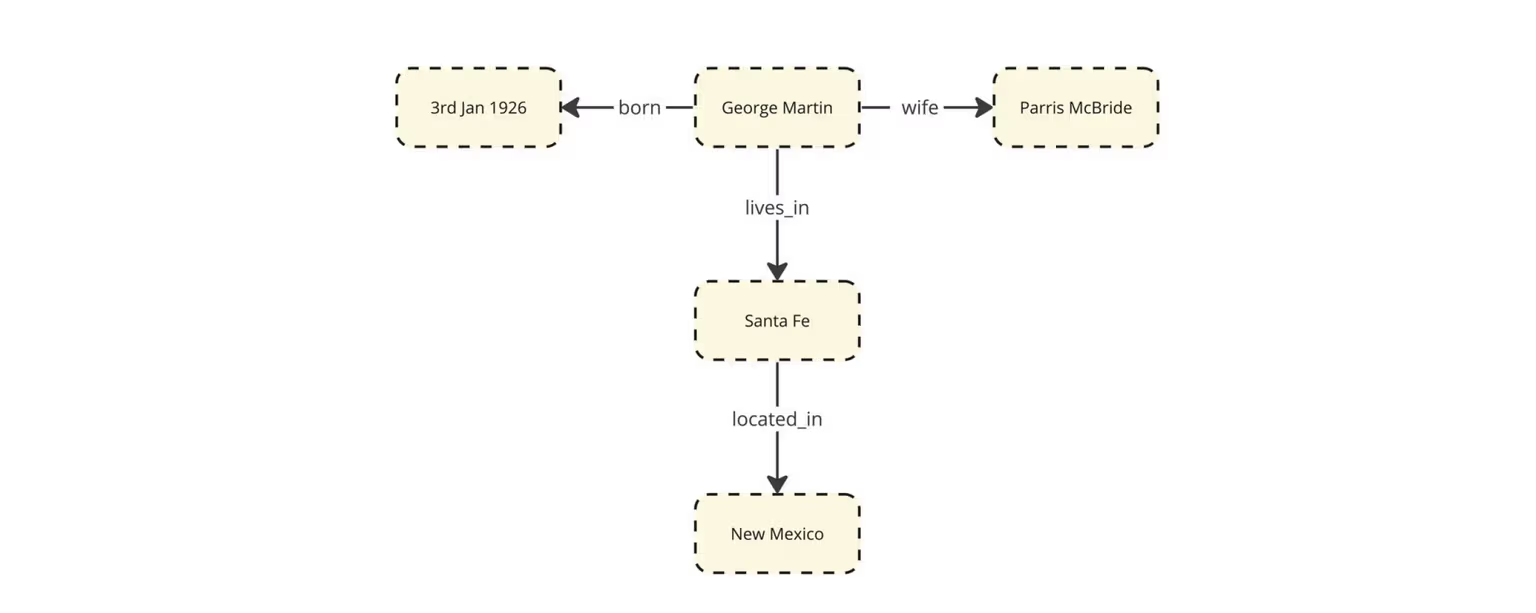

When modelled as a graph, this information from various documents will likely come together as follows:

This approach of curating the data is very helpful when collecting context to answer specific questions like - “Is George Martin married?”

Each relationship from core subject “George Martin” can be analysed independently and cherry-picked into the context during retrieval stage.

Contrast, this with vector search approach which would have returned Document 2 and Document 3 during the retrieval stage.

Also note that at the time of adding new information to this graph mode, we get an opportunity to interrogate existing data and check for information integrity. For example, if an old text references a previous marriage to his now deceased wife, Gale Burnick; we will get a commit conflict when creating that relationship in the graph. This gives us a natural opportunity to apply data quality measures.

In the next blog we’ll demonstrate these concepts through a worked example.