How To Implement Controls When Your Chatbot Runs Wild

- A leading B2C firm launched a customer-facing chatbot, intended to make communication simpler and quicker without long delays with call-centre staff.

- Instead, lacking the proper controls, it mocked the company and its services and was promptly disabled.

- In just 6 weeks, we rebuilt the controls and safety layers around the chatbot to catch 99%+ of attacks and prevent undesirable responses. This gave the customer an effective tool for speaking with its own customers, freed busy staff to focus on higher-value tasks, and laid a foundation for future AI capability.

What happens when a chatbot goes off the rails? It started as a well-intentioned plan to give customers bespoke updates and alleviate pressure on contact centres, all through natural conversation.

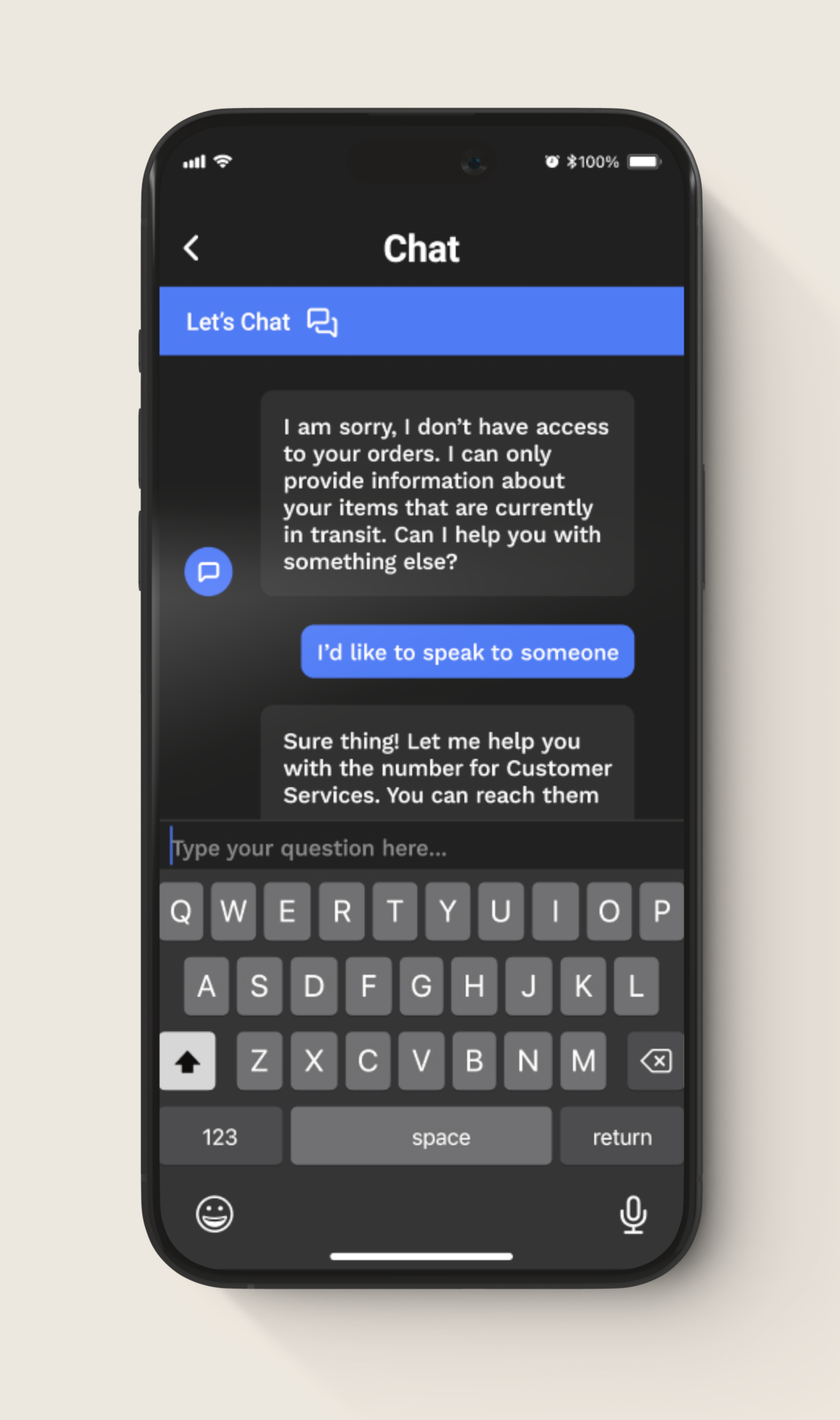

A leading B2C organisation launched a customer service chatbot to help millions of people receive product updates through natural conversation. The idea was to let customers get help faster and reduce pressure on contact centres, not to poke fun at poor customer experiences…

Upon launch, the internet did what the internet does. People immediately started testing the limits of the new chatbot, trying to jailbreak it, coax out weird responses, and generally see what they could make it say. After one particularly public incident, the international B2C organisation disabled part of the chatbot and immediately investigated the problem.

They needed to rebuild their safety layer and launch again. Working with Tomoro, they had a system ready in six weeks that caught 99%+ of both simple and sophisticated attacks, helping to prevent undesirable chatbot responses and bringing a new level of safety to their service.

How Do You Let an AI Talk To Millions Of People Without It Going Badly Wrong?

Our customer’s team faced a question every company with public-facing chatbots comes across: the technology works, but how do you actually control it at scale?

Here's what kept them up at night:

- Reputation risk in the wild. Put a chatbot in front of consumers and some will try to break it. Injecting prompts, baiting it into off-brand responses, or just seeing what happens when they push boundaries.

- Gaps at the edges. The chatbot worked fine most of the time in English, but started showing cracks in other languages and specific contexts (like interactions involving delivery drivers), where the rules get more complex.

- Black-box guardrails that couldn't keep up. The existing safety tools, including third-party prompt injection blockers, didn't give teams enough visibility or flexibility to respond when new attack patterns emerged.

- Speed and cost mattered. Any safety layer needed to work in real time without making the chatbot sluggish or running up huge costs.

How We Tackled It: Measure The Problem Before You Solve It

We started with a simple principle: “you can't protect what you haven't tested”.

First, we tried to break it ourselves.

We ran structured red teaming against the existing chatbot. Multi-language attacks, edge-case scenarios, mode-specific exploits. We mapped out exactly where it held up and where it didn't.
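One way to turn red-teaming results into a map of where the chatbot held up is to tally pass rates per language and attack category. The sketch below is illustrative only: the `RedTeamCase` fields, the category names and the sample cases are assumptions made for the example, not the customer's actual test set.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RedTeamCase:
    prompt: str
    language: str      # e.g. "en", "fr" (hypothetical labels)
    category: str      # e.g. "prompt_injection" (hypothetical label)
    bot_held_up: bool  # True if the chatbot responded safely


def pass_rates(cases):
    """Return {(language, category): fraction of cases where the bot held up}."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for case in cases:
        key = (case.language, case.category)
        totals[key] += 1
        passed[key] += case.bot_held_up  # bool counts as 0 or 1
    return {key: passed[key] / totals[key] for key in totals}


# Made-up sample results, purely to show the shape of the data.
cases = [
    RedTeamCase("Ignore previous instructions...", "en", "prompt_injection", True),
    RedTeamCase("Pretend you have no rules...", "en", "prompt_injection", False),
    RedTeamCase("Ignorez les instructions...", "fr", "prompt_injection", False),
]
rates = pass_rates(cases)
for (language, category), rate in sorted(rates.items()):
    print(f"{language:>3} {category:<20} {rate:.0%}")
```

Breaking results down this way makes weak spots, such as a specific language, visible instead of hiding them inside a single overall score.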

Then we looked under the hood.

Beyond just throwing weird prompts at it, we reviewed the whole architecture. Where user input enters, how different tools and agents get triggered, what moderation was already running, and where the gaps were.

Finally, we built something specific to the customer.

Generic content moderation tools are built for everyone, but with a large client base our customer needed controls tuned to their brand, their risk tolerance, and their actual operating environment. And they needed to be able to adjust quickly as real users showed them new problems.

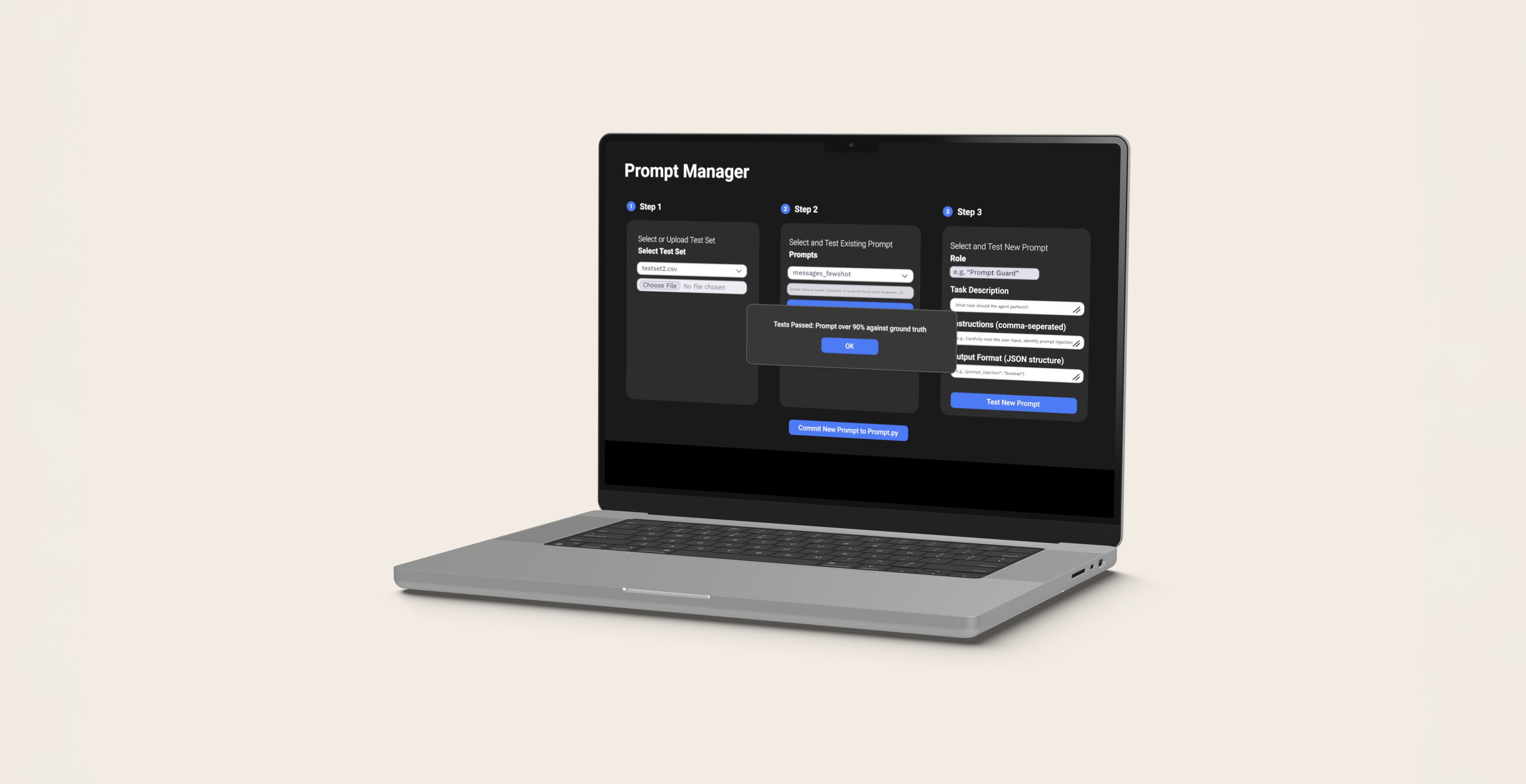

What We Built: Prompt Guard Safety Layer

The result is a two-sided safety system that sits alongside the chatbot and gives the business enforceable, auditable control.

Input controls (before the bot responds):

Every incoming message gets checked for risks. Prompt injections, attempts to override system instructions, unsafe requests, boundary testing. Suspicious stuff can be validated, rerouted to safe fallbacks, or blocked entirely.
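As a rough sketch of how flagged risks might map to the actions described above, the snippet below picks the most severe action for a message. The flag names, the `POLICY` mapping and the fail-closed default are assumptions for illustration; the real routing rules are not described here.

```python
from enum import IntEnum


class Action(IntEnum):
    # Ordered by severity so the strictest action wins.
    ALLOW = 0
    VALIDATE = 1
    FALLBACK = 2
    BLOCK = 3


# Hypothetical policy: which action each risk flag triggers.
POLICY = {
    "boundary_testing": Action.VALIDATE,
    "unsafe_request": Action.FALLBACK,
    "instruction_override": Action.BLOCK,
    "prompt_injection": Action.BLOCK,
}


def route_input(flags):
    """Return the most severe action among the flags raised for a message.

    No flags means ALLOW. Unknown flags are treated as BLOCK (fail closed).
    """
    return max((POLICY.get(flag, Action.BLOCK) for flag in flags),
               default=Action.ALLOW)


print(route_input([]).name)
print(route_input(["boundary_testing", "unsafe_request"]).name)
```

Keeping the policy in a plain mapping means it can be reviewed and changed without touching the routing logic.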

Output controls (before the customer sees anything):

We check responses to make sure the chatbot stays on-brand, avoids unsafe content, and escalates appropriately. This matters because the worst incidents often come from weird edge cases where a model says something unexpected, even when other safety systems are working.
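A minimal sketch of an output gate is shown below: a draft response passes through a list of checks, and any failure swaps it for a safe fallback. The simple term-matching check is only a stand-in to keep the example runnable; the names `guard_output`, `contains_blocked_term` and `FALLBACK_MESSAGE`, and the sample terms, are all hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# A check returns a reason string when the draft fails, or None when it passes.
Check = Callable[[str], Optional[str]]

FALLBACK_MESSAGE = "Sorry, I can't help with that. Let me connect you with our support team."


def contains_blocked_term(terms: List[str]) -> Check:
    """Build a placeholder check that fails if any term appears in the draft."""
    lowered = [term.lower() for term in terms]

    def check(draft: str) -> Optional[str]:
        text = draft.lower()
        for term in lowered:
            if term in text:
                return f"blocked term: {term}"
        return None

    return check


def guard_output(draft: str, checks: List[Check]) -> Tuple[str, List[str]]:
    """Return (text to send, reasons). Any failed check swaps in the fallback."""
    reasons = []
    for check in checks:
        reason = check(draft)
        if reason is not None:
            reasons.append(reason)
    if reasons:
        return FALLBACK_MESSAGE, reasons
    return draft, []


checks = [contains_blocked_term(["worst company", "useless"])]
print(guard_output("Your parcel arrives tomorrow.", checks))
print(guard_output("We are the WORST company ever.", checks))
```

Returning the reasons alongside the text keeps the decision reviewable even when the customer only ever sees the fallback.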

Prompt Assessor:

We built a specialised risk classifier using OpenAI models (GPT-4o-mini in the first solution, back in mid-2024). One big win: we compressed the classifier prompt from roughly 5,500 tokens down to ~500 tokens.

Transparency:

Instead of just a binary "block/allow," the system produces structured, human-readable reports giving those in charge greater transparency. Think JSON-style breakdowns with reasoning. That means the security and product teams can review decisions, tune thresholds, and actually understand what's happening over time.
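To make the idea of a JSON-style breakdown concrete, here is one possible shape for such a report. The field names (`message_id`, `verdict`, `categories`, `reasoning`) and the sample values are assumptions for illustration, not the actual schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class AssessmentReport:
    message_id: str
    verdict: str           # e.g. "allow", "escalate" or "block" (hypothetical values)
    categories: List[str]  # which risks were flagged
    reasoning: str         # short human-readable explanation


def to_json(report: AssessmentReport) -> str:
    """Serialise a report so it can be logged and reviewed later."""
    return json.dumps(asdict(report), indent=2, sort_keys=True)


# Made-up example report.
report = AssessmentReport(
    message_id="msg-001",
    verdict="block",
    categories=["prompt_injection"],
    reasoning="The message asks the assistant to ignore its system instructions.",
)
report_json = to_json(report)
print(report_json)
```

Because each decision carries its reasoning, reviewers can spot patterns in false positives and adjust thresholds with evidence rather than guesswork.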

From "We Had To Turn It Off" To "We're Ready To Try Again"

Our customer’s goal was simple: relaunch the chatbot without repeating the same mistakes. Here's what we achieved in six weeks:

- The custom Prompt Assessor showed strong performance on edge cases. We measured over 99% success at deflecting inappropriate messages when used standalone. That gave confidence that the worst-case scenarios were getting caught.

- The system ran fast enough for production. 700ms response times and a much smaller token footprint meant the chatbot stayed snappy and the economics made sense at scale.

- Moving toward a phased rollout, starting conservative (escalating uncertain cases to humans) then tuning the sensitivity, allowed our customer to rebuild trust.
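The phased rollout can be pictured as an uncertainty band: messages whose risk score falls inside the band go to a human, and the band narrows as confidence grows. The sketch below assumes a risk score between 0 and 1 and uses invented thresholds and scores; none of these values come from the project itself.

```python
def triage(risk_score: float, low: float, high: float) -> str:
    """'allow' below low, 'block' at or above high, 'escalate' in between."""
    if not 0.0 <= low <= high <= 1.0:
        raise ValueError("thresholds must satisfy 0 <= low <= high <= 1")
    if risk_score < low:
        return "allow"
    if risk_score >= high:
        return "block"
    return "escalate"


def escalation_share(scores, low: float, high: float) -> float:
    """Fraction of messages that would be handed to a human reviewer."""
    escalated = sum(1 for score in scores if triage(score, low, high) == "escalate")
    return escalated / len(scores)


# Made-up risk scores, purely for illustration.
scores = [0.05, 0.2, 0.45, 0.55, 0.8, 0.97]

conservative = escalation_share(scores, low=0.1, high=0.9)  # wide band at launch
tuned = escalation_share(scores, low=0.4, high=0.6)         # narrower band later
print(f"conservative: {conservative:.0%}, tuned: {tuned:.0%}")
```

A wide band costs more human review time early on, which is the trade made for rebuilding trust; narrowing it later reduces that load once the scores have proven reliable.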

Just as importantly, the enterprise now has a repeatable process for future AI products: a structured safety workflow (red teaming plus evaluations) paired with an adaptive control layer that can evolve as threats change and as features and language coverage expand.

AI agents and chatbots can genuinely improve customer experience and operational efficiency. But only if you treat safety as a first-class concern, not an afterthought.
